I sat down with Better Stack’s podcast to discuss the AI velocity -> operational chaos thesis, my time at Meta, my SRE career in general, and a few tales from my life on the road!

Thanks Better Stack for an action-packed conversation!

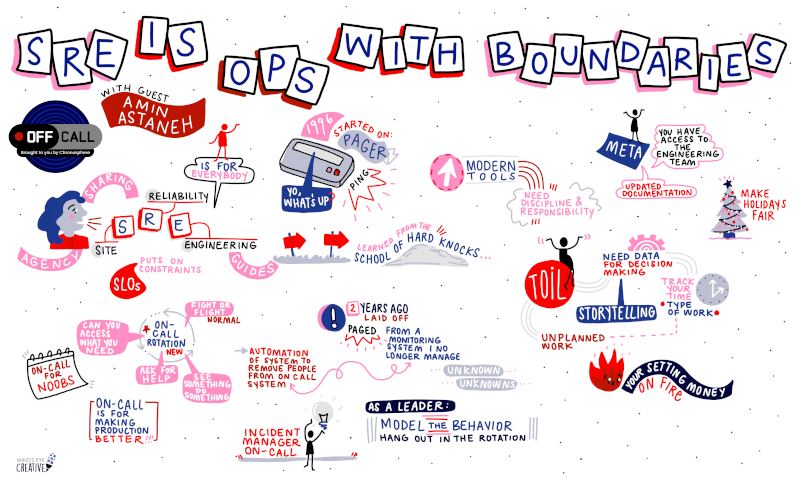

Join fellow SRE Paige Cruz and yours truly on an exploration of my history being on-call, using multiple generations of observability tools, and how to make the experience as painless as possible.

One of the major points of the discussion is how SRE sets boundaries around taking on-call burden on behalf of engineering teams in contrast to classic IT Operations teams.

I also share a funny story about the last page I received while...

2024 was a very eventful year in the tech industry, with Certo Modo being no exception to the rule.

The year started strong, with a full docket of engagements carried over from 2023. Many of these clients were former colleagues from my corporate days who needed a hand with culture, leadership and technical matters. Eventually, though, that stream of work tapered off. The major challenges my clients faced had been resolved, leaving them equipped to...

I’m pleased to announce that I’ve launched a new podcast: Reliability Rebels!

Over the past couple of years, I’ve had the privilege of being a guest on several tech podcasts (and will continue to do so); however, I decided to create my own.

(And yes, I produced the intro music!)

I wanted to explore how people in tech sometimes have to challenge the status quo to improve their systems, as that was definitely my experience across...

Time tracking gets a bad rap. It’s easy to forget, adds extra steps to an already busy schedule, and often feels like micromanagement. But hear me out—when used strategically, it can be a game-changer for engineering teams.

Let me be clear: I’m not suggesting that you track every minute of your day. In knowledge work, a lot of time is spent in thinking and discussion. Engineers should be assessed by the impact they deliver, not...

Another appearance on the Slight Reliability Podcast! This time we go over the basics of CI/CD, change management, my experience running a Change Advisory Board (CAB), testing in prod, and how to treat your test/deploy infrastructure!

Since you are reading this post, I am sure that you can relate to the classic plight of the IT, sysadmin, or Operations team: They are invisible until things go wrong.

For practitioners of DevOps and Site Reliability Engineering, that can also be true, especially for teams where the low-hanging fruit has already been addressed.

When the big outage happens, it’s all too common for management to have the kneejerk reaction to ask questions like...

Last time I covered several tips on how to launch and operate a Drone CI installation. As promised, I will now reveal my hard-earned secrets on how to build, configure, and monitor Drone CI pipelines!

This assumes pipelines using the Docker runner, which is the common use case (and the most useful!).

Yes, this is implicit with the use of the Docker runner, however, take a...

In a previous post, I explored why Jenkins should no longer be the default choice for CI/CD for new software projects. This time, let’s discuss an alternative that I’ve gotten quite familiar with recently: Drone CI.

Drone is simply described as a ‘self-service Continuous Integration platform for busy development teams’. Configuring a CI pipeline is as simple as activating the repo in the web UI and committing a .drone.yml file in the project’s...

I return to the Slight Reliability Podcast to discuss my experience in Meta’s Production Engineering… and tell a story about how I almost burnt down a server room early in my career! Don’t miss this one!

Configuration management is an essential competency when running production systems. It enables you to define the intended state of your servers as code rather than through manual effort- saving a lot of time in the process.

Throughout my career, I’ve used Ruby-based configuration management tools like Puppet and Chef; recently, however, I have started using Ansible for client projects.

Ansible is accessible to newcomers as:

Jenkins is an ‘open source automation server’ that many tech companies use for Continuous Integration and Continuous Delivery of software projects. It was first released in 2005 under the name Hudson. Its large collection of available plugins (~1800!), notably Pipeline, enables teams to automate common operations tasks such as building, testing, and releasing.

All of that being said: it’s time to consider alternatives, especially for new software projects. This article outlines the...

An important process when running a production system is automating manual tasks. This is especially important for fast-growing companies as the engineering team’s time can easily be eaten up by the toil involved in incident response, testing/releasing, etc.- preventing them from implementing the features and improvements that enable further growth and revenue.

It is possible for a product to be a victim of its own success. Don’t let this happen to you!

This article provides...

Another podcast! This week I’m a guest on All Things Ops from CheckMK!

(I used CheckMK years ago as it provided an improved interface and plugin system over stock Nagios.)

Host Elias Voelker and I discussed:

One of my most...

I’m starting a new series where I share my experiences exploring cloud-native/platform engineering tools and technologies, starting with building the foundation: a Kubernetes installation in the cloud.

Why am I doing this?

Today’s mission: get a simple Kubernetes cluster online in the cloud, using infrastructure-as-code! Since I’m an...

This article introduces Kanban(看板), a very effective process for organizing your team’s work and driving improvements, especially if you are on an interrupt-driven team such as Site Reliability Engineering, Operations, IT, or Customer Support.

The essential part of the process is the kanban board, which consists of cards representing each work item. Cards are moved between columns that represent the state of the work item, usually from left to right, such as:

I’m continuing my tour as a guest on tech podcasts! This time I’m on the Day Two Cloud podcast from Packet Pushers which focuses on the realities of cloud adoption.

I really enjoyed the conversation with hosts Ned Bellavance and Ethan Banks, who were both very insightful and funny!

Don’t miss this one as it was an action-packed discussion! Together, we covered:

Let’s try something new and recall one of my most memorable production incidents!

Earlier in my career, I managed an operations team at a medium-sized tech company. The main revenue-generating product consisted of thousands of EC2 instances, all depending on Puppet for configuration management.

Puppet, unlike recent CM systems like Ansible, used a centralized server to store config manifests and required authentication in order to apply them to clients. We configured our hosts...

Another podcast guest appearance! This time I’m on the Slight Reliability podcast, which answers “what is site reliability engineering (SRE) really about?”.

(I’m on the road this week! Next week we’ll return to our usually-scheduled articles.)

In this episode, host Stephen Townshend and I cover a lot of ground including making ops work visible, measuring toil, the power of calculating the monetary value of work, getting developers on-call, the embedded model for SRE, SLOs,...

This week I’m a guest on the Practical Operations podcast, which focuses on “systems, operations and scaling with a focus on real world use cases and solutions to common problems”.

We discuss my experience in DevOps transformations, running a Site Reliability Engineering team, and my experience as a consultant!

I highly recommend following this podcast as the hosts are very knowledgeable and are really entertaining to listen to!

...

When operating large-scale production systems, we rely on infrastructure-as-code (IaC) to keep the state of servers and cloud resources consistent over time. We also benefit from observability platforms to automatically gather metrics from these resources.

However, there are times when both of these essential mechanisms are rendered ineffective during a production incident. How can an engineer troubleshoot and implement a fix successfully across a large number of hosts?

Enter the parallel distributed shell.

A parallel...

The most valuable and impactful work is done through others and not through the strivings of just one person. In the tech industry, creating customer value is a really complicated process and involves the efforts of different people, teams, and perspectives.

Consider a SaaS company: in order for it to be successful, groups like Engineering, Customer Success, Sales, Marketing, and Finance all need to exist and work together in tandem to create a product that...

This article continues the discussion on how your team can learn from failure after a production incident. While write-ups are very important in capturing and documenting what took place, the real value is created from an open and deliberate conversation with the team to identify the lessons learned and the improvements needed to create a more reliable system. That conversation is the post-mortem.

Post-mortems are the primary mechanism for teams to learn from failure....

When is an incident considered ‘done’? Is it when the production impact has been addressed and the on-call goes back to bed? If that were true, teams would pass up a huge opportunity to learn and improve from what the incident can teach them, and the on-call (and more importantly, customers) would continue to have a sub-par experience from repeat incidents.

This post discusses the importance and process of the write-up, which is documenting an...

This article explains why practices like DevOps and Site Reliability Engineering are essential for a successful technology business. Sure, they are touted as a way to change company culture and improve collaboration between teams, but what specific business value should you expect from investing in these capabilities?

Let’s start by remembering the goal of every business:

to make money by increasing throughput while simultaneously reducing inventory and operational expense.

In software development, let’s clarify the...

In our Incident Management series, we’ve talked about how mature monitoring, escalation policies, and alerting enable a swift response when things go wrong in production. Let’s now talk about the people and processes that actually do the responding: the on-call rotation.

Simply put, an on-call rotation is a group of people that share the responsibility of being available to respond to emergencies on short notice, typically on a 24/7 basis. This practice...

So far, our Incident Management series has discussed the importance of monitoring and a solid escalation policy in the swift detection of production issues. Both of them depend on a third capability that we will go over today: alerting.

Alerting notifies the engineering team of problems in production so that they can respond appropriately and promptly, based on severity.

Alerts tend to fall into three categories:

Last time in our Incident Management series we discussed how monitoring is essential to responding quickly when things go wrong with your app’s availability or performance.

However, monitoring won’t be able to successfully detect every failure. That is especially true for newly-launched services where monitoring is based on theory and not experience.

How do you prepare for the situation where another team or even a customer (heaven forbid) reports a production issue?

The answer...

When running a production system, one of the main responsibilities is being able to respond when things go wrong. This is especially important for newly-launched or rapidly-changing systems where incidents are guaranteed, usually due to defect leakage or performance/scaling challenges.

Incident readiness typically involves the following capabilities:

Imagine: Your team has designed and developed the initial version of an amazing product with market fit, and you wish to offer it to paying customers as soon as possible. It’s time to prepare for launch!

Product launches exist on a razor’s edge between excitement and terror. They very much depend on first impressions that customers get when using your product:

I believe we’re entering a golden age of observability- we can gather metrics from our applications and infrastructure, better interpret them with query languages and pretty dashboards, and get notifications in chatrooms and our oncall systems. All of this technology at our fingertips- without any software licensing fees!

The challenge I see with these new tools is that they tend to assume ‘cloud-native’ infrastructure- the happy path for setup and configuration usually requires a container...

When we discuss useful tools in the DevOps and SRE space, we tend to speak in terms of technology (e.g., observability, configuration management, container orchestration, CI/CD). These tools enable us to be successful by introducing reliability and efficiency to the systems that support our products.

They are ubiquitous: they are discussed in places like Hacker News, supported by large communities, and featured at meetups and conferences, and their enterprise versions are aggressively sold and advertised… even in unlikely places...

Last time I shared my thoughts on blameless postmortems and how they create a safe space for revealing process and technology gaps contributing to past incidents.

Today I want to introduce another opportunity for teams to learn and improve: the ‘oncall retrospective’, which:

I was introduced to this practice by Jos Visser while...

Does your team conduct postmortems as part of their incident response process? It’s a great way to learn from failure and find opportunities to make your systems more reliable.

One piece of advice: make sure they are BLAMELESS.

This creates an environment of psychological safety, enabling your team to be more forthcoming about the factors that triggered or contributed to the incident- allowing them to be tracked and addressed.

In contrast, if your team feels...